We’ve been talking about artificial intelligence (AI), drones and machine learning for a while now. But does it sometimes feel like just a bunch of fancy words flying around?

In this article, I would like to introduce three basic machine learning concepts for those who love drones. No matter why you love flying drones, or how you like to use drone data, these concepts are great starting points.

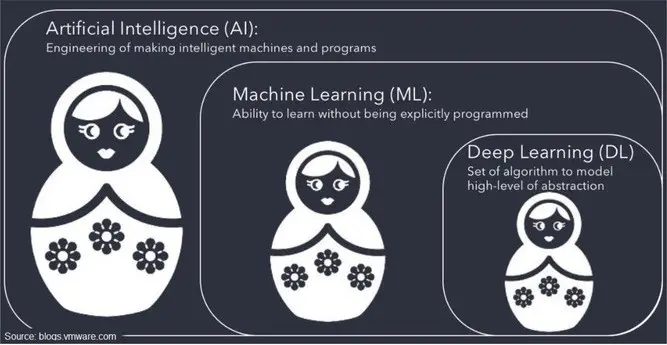

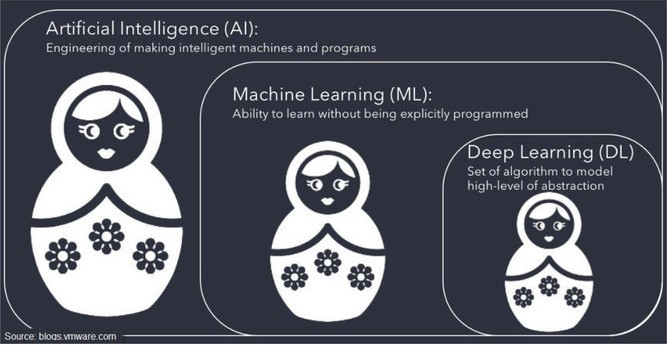

The relationship between AI, ML, & DL

There are tons of definitions online for AI, machine learning (ML), and deep learning (DL). Sometimes the terms are used interchangeably, sometimes they are not.

The easiest way to understand the relationship between them is to picture Matryoshka (nesting) dolls: AI is the biggest doll, ML sits inside AI, and DL sits inside ML.

Machine learning (ML) vs deep learning (DL)

Now that we know DL is a part of ML, it is inaccurate to treat DL as something separate from ML. ML simply covers more than DL does.

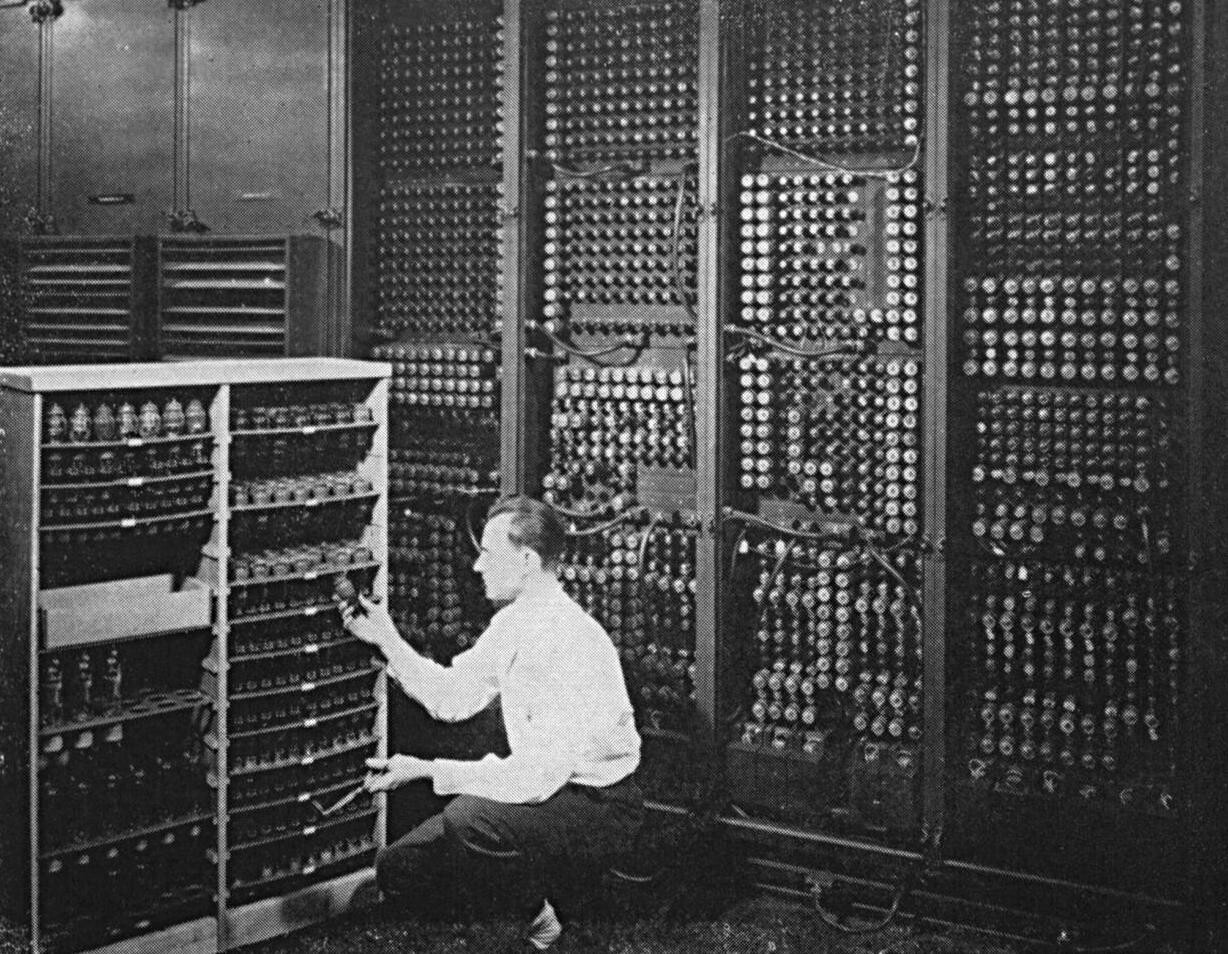

However, being the bigger category does not make ML better than DL. Think about the history of computers: what we have today is much smaller, yet far more powerful, than the very first computing machines.

When people compare the two, most of the time they are actually comparing “non-deep”, or classic, machine learning with deep learning.

In that comparison, classic ML is usually described as a technology that still needs a lot of human intervention, while DL is described as a more advanced technology with a much higher level of learning ability.

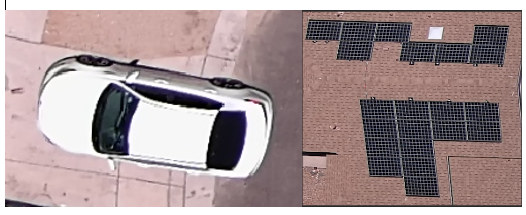

This is how I understand it. Take identifying solar panels and cars from drone images as an example.

If I’m applying a classic ML algorithm, I’m trying to give the computer a step-by-step guide. First, I feed it a lot of images labelled “cars” and “solar panels”. Then, I also tell the computer some features that I already know. For example:

- Cars usually have multiple colours, while solar panels are mainly dark.

- Cars have round corners or wavy edges, while solar panels consist of relatively uniform rectangular units.

- Cars are just above the ground, while solar panels are usually on an elevated object.

Note that this is a very simplified explanation. In reality, you need to turn these features into numbers and feed them into a mathematical model, such as a logistic regression classifier or another type of calculation.
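To make the idea a bit more concrete, here is a minimal sketch of the classic ML approach in plain Python. Everything in it is an assumption for illustration: the three feature names mirror the bullets above, the numbers are made up rather than measured from real drone images, and logistic regression is simply one common choice of model, not the only one.

```python
import math

# Hypothetical hand-crafted features for each image, each scaled to 0..1:
#   colour_variety    - how many different colours appear
#   edge_straightness - how straight and rectangular the edges are
#   height            - how far the object sits above the ground
# Label 1 = car, label 0 = solar panel. All values are invented for this sketch.
TRAINING_DATA = [
    ((0.9, 0.2, 0.1), 1),
    ((0.8, 0.3, 0.0), 1),
    ((0.7, 0.1, 0.1), 1),
    ((0.6, 0.4, 0.2), 1),
    ((0.2, 0.9, 0.8), 0),
    ((0.1, 0.8, 0.9), 0),
    ((0.3, 0.7, 0.6), 0),
    ((0.2, 0.95, 0.7), 0),
]


def predict_probability(weights, bias, features):
    """Return the model's estimated probability that the object is a car."""
    z = bias + sum(w * x for w, x in zip(weights, features))
    return 1.0 / (1.0 + math.exp(-z))


def train(data, epochs=2000, learning_rate=0.5):
    """Fit logistic regression with plain batch gradient descent."""
    weights = [0.0] * len(data[0][0])
    bias = 0.0
    for _ in range(epochs):
        grad_w = [0.0] * len(weights)
        grad_b = 0.0
        for features, label in data:
            error = predict_probability(weights, bias, features) - label
            for i, x in enumerate(features):
                grad_w[i] += error * x
            grad_b += error
        weights = [w - learning_rate * g / len(data) for w, g in zip(weights, grad_w)]
        bias -= learning_rate * grad_b / len(data)
    return weights, bias


weights, bias = train(TRAINING_DATA)


def classify(features):
    """Turn the probability into one of the two labels."""
    return "car" if predict_probability(weights, bias, features) >= 0.5 else "solar panel"


print(classify((0.85, 0.25, 0.05)))  # colourful, curvy, near the ground
print(classify((0.15, 0.85, 0.75)))  # dark, rectangular, elevated
```

The important part is not the maths but the division of labour: I decided which features matter, and the model only learned how much weight to give each one.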

Deep learning, on the other hand, is capable of extracting these features by itself. It is loosely inspired by the networks of neurons in the human brain (that’s exactly why its backbones are called artificial neural networks).

This time, after feeding the DL algorithm the same dataset, I simply back off and let the algorithm learn by itself. I give it lots of cars and tell the machine only that they are cars, and it figures out for itself what makes a car look like a car. It behaves like a black box: nobody can easily see how it works inside, only the output it produces.

A DL model is generally considered to need a much larger dataset to produce good results, yet how little human intervention it requires shows its huge potential for the future.
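For contrast, here is a rough sketch of the “let it learn by itself” idea. It is not real deep learning: it is a toy network with a single hidden layer written in plain Python, trained on made-up 2x2 greyscale “images”, and the class name `TinyNet` and all the numbers are my own assumptions. What it does show is that I only pass in raw pixel values and labels, with no hand-written features.

```python
import math
import random

# Made-up 2x2 greyscale "images", flattened to 4 raw pixels (0 = black, 1 = white).
# Label 1 = car (brighter, varied pixels), label 0 = solar panel (uniformly dark).
IMAGES = [
    ([0.9, 0.4, 0.7, 0.8], 1),
    ([0.6, 0.9, 0.5, 0.7], 1),
    ([0.8, 0.7, 0.9, 0.3], 1),
    ([0.1, 0.2, 0.1, 0.1], 0),
    ([0.2, 0.1, 0.2, 0.2], 0),
    ([0.1, 0.1, 0.2, 0.1], 0),
]


class TinyNet:
    """A toy neural network: 4 pixels -> 3 hidden units (tanh) -> 1 output (sigmoid)."""

    def __init__(self, n_inputs=4, n_hidden=3, seed=0):
        rng = random.Random(seed)  # fixed seed so every run starts the same way
        self.w_hidden = [[rng.uniform(-0.5, 0.5) for _ in range(n_inputs)]
                         for _ in range(n_hidden)]
        self.b_hidden = [0.0] * n_hidden
        self.w_out = [rng.uniform(-0.5, 0.5) for _ in range(n_hidden)]
        self.b_out = 0.0

    def forward(self, pixels):
        """Return the hidden activations and the probability of 'car'."""
        hidden = [math.tanh(b + sum(w * x for w, x in zip(ws, pixels)))
                  for ws, b in zip(self.w_hidden, self.b_hidden)]
        z = self.b_out + sum(w * h for w, h in zip(self.w_out, hidden))
        return hidden, 1.0 / (1.0 + math.exp(-z))

    def loss(self, data):
        """Mean cross-entropy: lower means the predictions match the labels better."""
        total = 0.0
        for pixels, label in data:
            p = min(max(self.forward(pixels)[1], 1e-12), 1.0 - 1e-12)
            total -= label * math.log(p) + (1 - label) * math.log(1.0 - p)
        return total / len(data)

    def train(self, data, epochs=2000, learning_rate=0.2):
        """Backpropagation, updating the weights after every single image."""
        for _ in range(epochs):
            for pixels, label in data:
                hidden, p = self.forward(pixels)
                d_out = p - label  # error signal at the output
                for j, h in enumerate(hidden):
                    # Push the error back through tanh (derivative is 1 - h^2).
                    d_hidden = d_out * self.w_out[j] * (1.0 - h * h)
                    self.w_out[j] -= learning_rate * d_out * h
                    self.b_hidden[j] -= learning_rate * d_hidden
                    for i, x in enumerate(pixels):
                        self.w_hidden[j][i] -= learning_rate * d_hidden * x
                self.b_out -= learning_rate * d_out


net = TinyNet()
loss_before = net.loss(IMAGES)
net.train(IMAGES)
loss_after = net.loss(IMAGES)
print(f"loss before training: {loss_before:.3f}, after: {loss_after:.3f}")
```

Notice that nowhere did I tell the network that solar panels are dark. Whatever it uses to tell the two apart is stored in the hidden-layer weights, which is also why the result is hard to read for a human.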

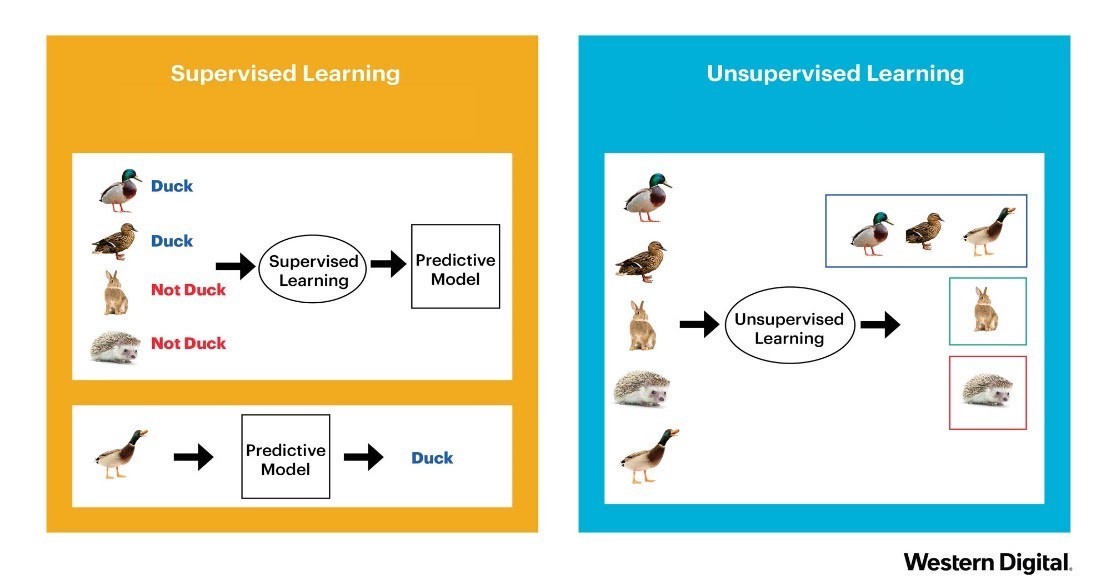

Supervised learning vs unsupervised learning

The last concept is a simple one, but still worth knowing if you want to learn more about machine learning.

In both the ML and DL examples above, labelled images were used. In other words, the input dataset is ‘supervised’ by a human, since we are telling the computer from the beginning what is a car and what is a solar panel. This is called supervised learning.

What if we are looking at objects we don’t even know how to tell apart, which means we are unable to label them? Unsupervised learning is a method that doesn’t need the input dataset to be labelled.

We can then use either classic ML algorithms or state-of-the-art DL models to cluster the data, that is, to group similar objects together. Humans can then examine the groups and add labels to them.
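As a small illustration of grouping without labels, here is a sketch of k-means clustering, one classic unsupervised algorithm. The points, the choice of two features, and the naive way of picking the starting centres are all assumptions made to keep the example short.

```python
import math

# Unlabelled, made-up measurements for eight objects seen from a drone:
# (brightness, edge_straightness). Nobody has told the computer what they are.
POINTS = [
    (0.9, 0.2), (0.8, 0.3), (0.85, 0.1), (0.7, 0.25),
    (0.1, 0.9), (0.2, 0.8), (0.15, 0.95), (0.3, 0.85),
]


def k_means(points, k=2, iterations=20):
    """Group points into k clusters by repeatedly moving k centres."""
    centroids = list(points[:k])  # naive start: just take the first k points
    labels = [0] * len(points)
    for _ in range(iterations):
        # Step 1: assign every point to its nearest centre.
        labels = [min(range(k), key=lambda c: math.dist(p, centroids[c]))
                  for p in points]
        # Step 2: move each centre to the average of the points assigned to it.
        for c in range(k):
            members = [p for p, label in zip(points, labels) if label == c]
            if members:  # keep the old centre if a cluster ends up empty
                centroids[c] = tuple(sum(axis) / len(members) for axis in zip(*members))
    return labels, centroids


labels, centroids = k_means(POINTS)
for point, label in zip(POINTS, labels):
    print(f"{point} -> group {label}")
```

The algorithm only says “these belong together”. It is still up to a person to look at each group and decide that one is cars and the other is solar panels.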

With unsupervised learning, it is definitely harder to tell whether the result is good or not, since there are no labels to check it against. But it is very helpful for finding patterns in data.

I hope that after reading about these three machine learning concepts, you can start to understand how this technology is applied within the drone industry.